Ping Monitoring

What Is Ping?

Ping is a network administration tool used to test the reachability of a host on an Internet Protocol (IP) network. It works by sending Internet Control Message Protocol (ICMP) Echo Request packets to the target host and waiting for a response. This basic utility is fundamental in diagnosing network connections and performance issues, making it a critical tool for IT professionals.
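To make the Echo Request concrete, here is a minimal Python sketch of how such a packet can be assembled. It follows the header layout in RFC 792 (type, code, checksum, identifier, sequence number) and the Internet checksum from RFC 1071. The function names `internet_checksum` and `build_echo_request` and the default payload are illustrative assumptions, not part of any ping implementation or of Netdata. The sketch only builds the bytes; actually sending them needs a raw or ICMP datagram socket, which usually requires elevated privileges.

```python
import struct

ICMP_ECHO_REQUEST = 8  # ICMP type for Echo Request (RFC 792)


def internet_checksum(data: bytes) -> int:
    """Return the 16-bit one's-complement checksum used by ICMP (RFC 1071)."""
    if len(data) % 2:
        data += b"\x00"  # pad to an even number of bytes
    total = 0
    for i in range(0, len(data), 2):
        total += (data[i] << 8) | data[i + 1]
    # Fold any carries back into the low 16 bits.
    while total >> 16:
        total = (total & 0xFFFF) + (total >> 16)
    return ~total & 0xFFFF


def build_echo_request(identifier: int, sequence: int, payload: bytes = b"ping") -> bytes:
    """Build an ICMP Echo Request (hypothetical helper for illustration)."""
    # The checksum is computed with the checksum field set to zero.
    header = struct.pack("!BBHHH", ICMP_ECHO_REQUEST, 0, 0, identifier, sequence)
    checksum = internet_checksum(header + payload)
    header = struct.pack("!BBHHH", ICMP_ECHO_REQUEST, 0, checksum, identifier, sequence)
    return header + payload


if __name__ == "__main__":
    packet = build_echo_request(identifier=0x1234, sequence=1)
    print(packet.hex())
```

A receiver validates the packet by running the same checksum over the whole message; a correctly formed packet yields zero.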

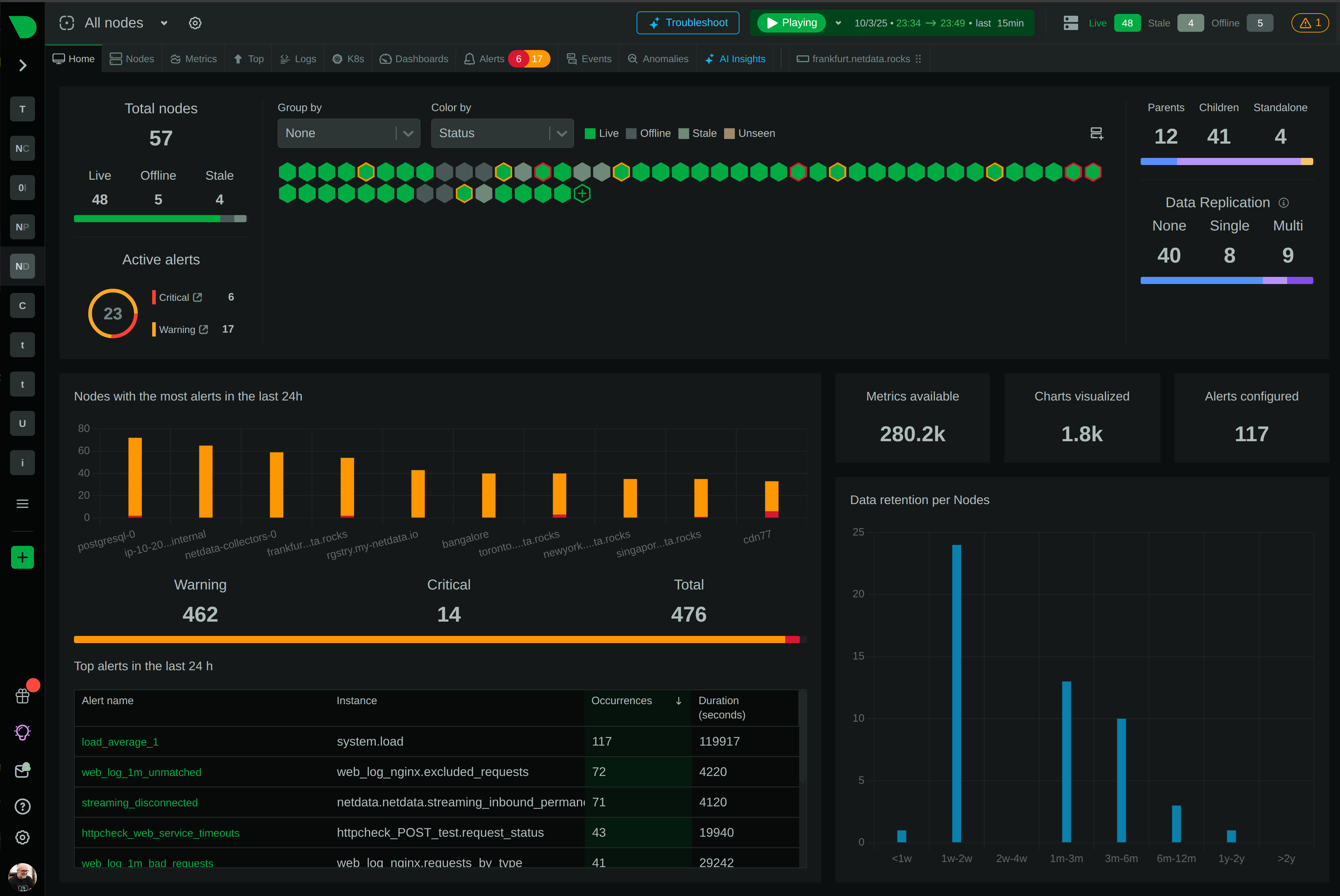

Monitoring Ping With Netdata

Netdata monitors ping through the ping module of its go.d.plugin. This ping monitoring tool assesses network performance by measuring round-trip time (RTT) and packet loss, offering insight into the health and reliability of your network connections. With Netdata, you can monitor ping in real time, visualize trends, identify bottlenecks, and troubleshoot issues efficiently.

Why Is Ping Monitoring Important?

Monitoring ping is vital for maintaining network performance and ensuring reliable connectivity. By continually assessing ping metrics, you can detect latency issues, packet loss, and the overall responsiveness of your network, which are crucial for diagnosing the root cause of network-related problems. Effective ping monitoring helps prevent downtime and ensures superior application performance.

What Are The Benefits Of Using Ping Monitoring Tools?

Using tools for monitoring ping, like Netdata, provides comprehensive insights into network performance. Benefits include:

- Real-time monitoring: Continuously observe network latency and packet flow for quick issue detection.

- Detailed metrics: Get accurate data on RTT and packet loss for informed decision-making.

- Alerts and notifications: Receive timely alerts when performance thresholds are breached, allowing for proactive problem solving.

- Scalability and flexibility: Netdata supports multiple instances, making it adaptable to a wide range of network environments.

Understanding Ping Performance Metrics

- Ping Round-Trip Time (RTT): Measures the time between sending an Echo Request and receiving the matching reply from the host. It is a direct indicator of latency, and a sustained increase often points to network congestion.

- Ping Packet Loss: Indicates the percentage of packets lost during transmission, which impacts the stability of network communication.

- Ping Packets Transferred: Tracks the number of packets sent and received, offering insights into transmission efficiency.

- Ping Round-Trip Time Standard Deviation: Provides a statistical measure of the variability of RTT, helping to identify performance consistency.

| Metric Name | Description |
|---|---|
| ping.host_rtt | Ping round-trip time in milliseconds |
| ping.host_packet_loss | Percentage of packets lost |
| ping.host_packets | Number of ping packets transferred |
| ping.host_std_dev_rtt | Standard deviation of ping round-trip time |
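As a rough illustration of how the metrics above relate to raw measurements, the following Python sketch derives them from one round of ping results. The function name `summarize_pings`, the dictionary keys, and the use of `None` for an unanswered request are assumptions made for this example. The population form of the standard deviation is also an assumption; Netdata's ping module may compute it differently.

```python
import math
from typing import Optional, Sequence


def summarize_pings(rtts_ms: Sequence[Optional[float]]) -> dict:
    """Summarize one round of pings; None marks a request with no reply."""
    sent = len(rtts_ms)
    replies = [r for r in rtts_ms if r is not None]
    received = len(replies)
    loss_pct = 100.0 * (sent - received) / sent if sent else 0.0

    summary = {
        "sent": sent,
        "received": received,
        "loss_pct": loss_pct,
        "min_rtt_ms": None,
        "avg_rtt_ms": None,
        "max_rtt_ms": None,
        "std_dev_rtt_ms": None,
    }
    if replies:
        avg = sum(replies) / received
        # Population variance: divide by the number of replies.
        variance = sum((r - avg) ** 2 for r in replies) / received
        summary.update(
            min_rtt_ms=min(replies),
            avg_rtt_ms=avg,
            max_rtt_ms=max(replies),
            std_dev_rtt_ms=math.sqrt(variance),
        )
    return summary


if __name__ == "__main__":
    # Four requests sent, the third one received no reply.
    print(summarize_pings([10.0, 20.0, None, 30.0]))
```

In this sample, one lost packet out of four gives 25% packet loss, and the RTT statistics are computed only over the three replies that arrived.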

Advanced Ping Performance Monitoring Techniques

Advanced monitoring techniques include configuring custom alerts in Netdata so that you are notified as soon as network behavior deviates from normal. Netdata's integrated health alarms and visual dashboards can also speed up root cause analysis.

Diagnose Root Causes Or Performance Issues Using Key Ping Statistics & Metrics

Understanding key ping metrics helps you diagnose network issues quickly. For example, a sudden increase in RTT might indicate network congestion, while consistent packet loss could suggest link reliability issues. Netdata's visualization tools make these patterns easy to spot, so you can take corrective action sooner.
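The two patterns described here can be expressed as simple heuristics. The Python sketch below is illustrative only: the function name `diagnose`, the labels it returns, and the default thresholds (`spike_factor=2.0`, `loss_threshold_pct=1.0`) are assumptions chosen for the example, not Netdata defaults or alert definitions.

```python
from statistics import median
from typing import List, Sequence


def diagnose(
    rtt_history_ms: Sequence[float],
    loss_history_pct: Sequence[float],
    spike_factor: float = 2.0,        # assumed: latest RTT above 2x baseline is a spike
    loss_threshold_pct: float = 1.0,  # assumed: loss at or above 1% counts as lossy
) -> List[str]:
    """Return hypothetical findings from recent RTT and packet loss samples."""
    findings = []

    # Sudden RTT increase: compare the latest sample with the median of earlier ones.
    if len(rtt_history_ms) >= 2:
        baseline = median(rtt_history_ms[:-1])
        latest = rtt_history_ms[-1]
        if baseline > 0 and latest > spike_factor * baseline:
            findings.append("rtt_spike")

    # Consistent packet loss: every sample in the window is at or above the threshold.
    if loss_history_pct and all(l >= loss_threshold_pct for l in loss_history_pct):
        findings.append("persistent_loss")

    return findings


if __name__ == "__main__":
    print(diagnose([20, 21, 19, 22, 80], [0, 0, 0, 0, 0]))
    print(diagnose([20, 21, 20, 19], [2, 3, 2.5, 4]))
```

Using the median as the baseline keeps a single earlier outlier from masking a new spike. In practice, thresholds would need tuning to the normal behavior of each monitored host.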

FAQs

What Is Ping Monitoring?

Ping monitoring involves tracking the performance and health of network connections by evaluating ping metrics such as RTT and packet loss.

Why Is Ping Monitoring Important?

It provides essential insights into network latency and reliability, helping identify issues that could impact application performance.

What Does A Ping Monitor Do?

A ping monitor tracks the latency and packet loss of ICMP Echo Requests, offering real-time data on network performance.

How Can I Monitor Ping In Real Time?

With Netdata, you can monitor ping in real time using its intuitive dashboards and alerts. Start by signing up to Netdata for free.