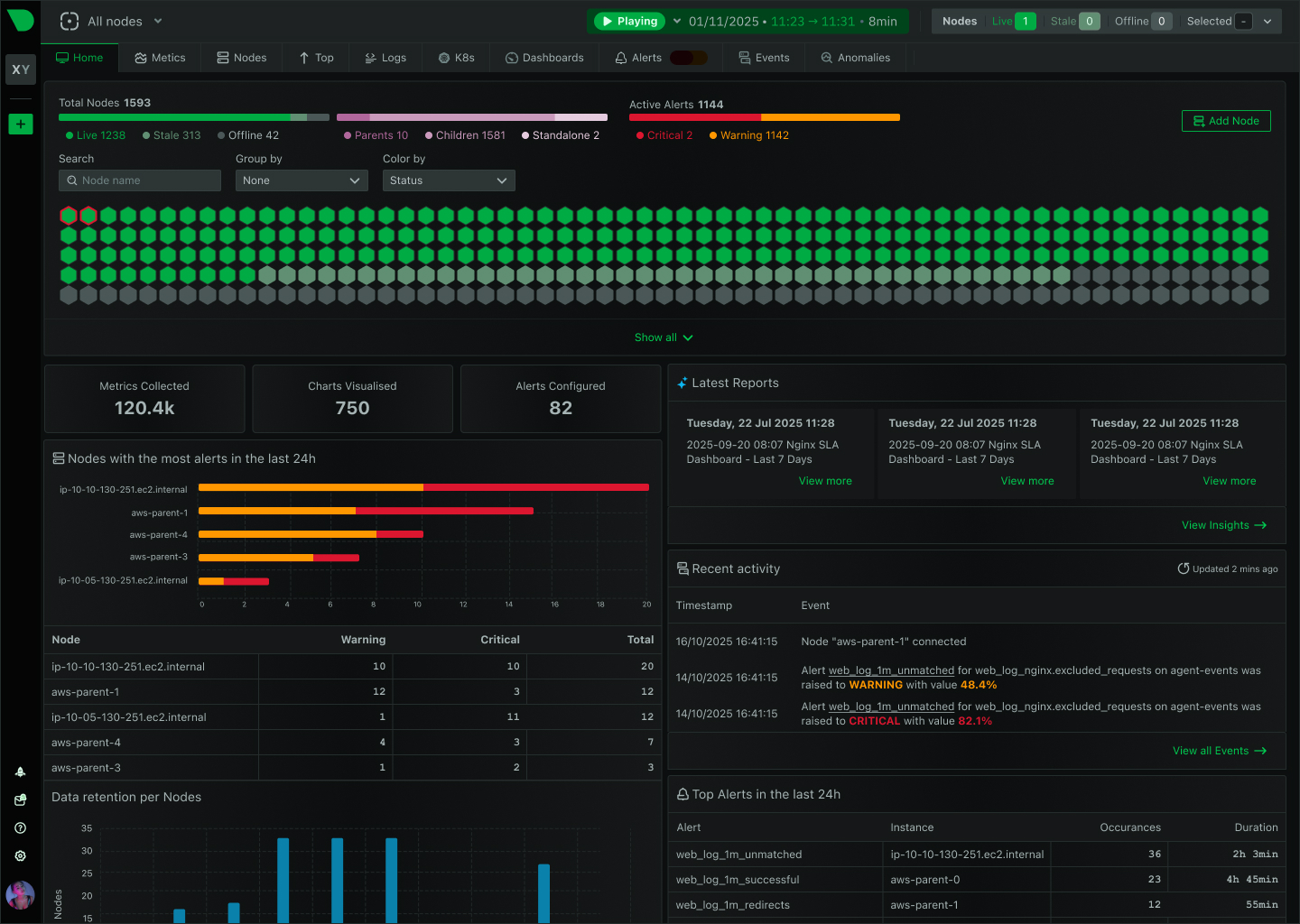

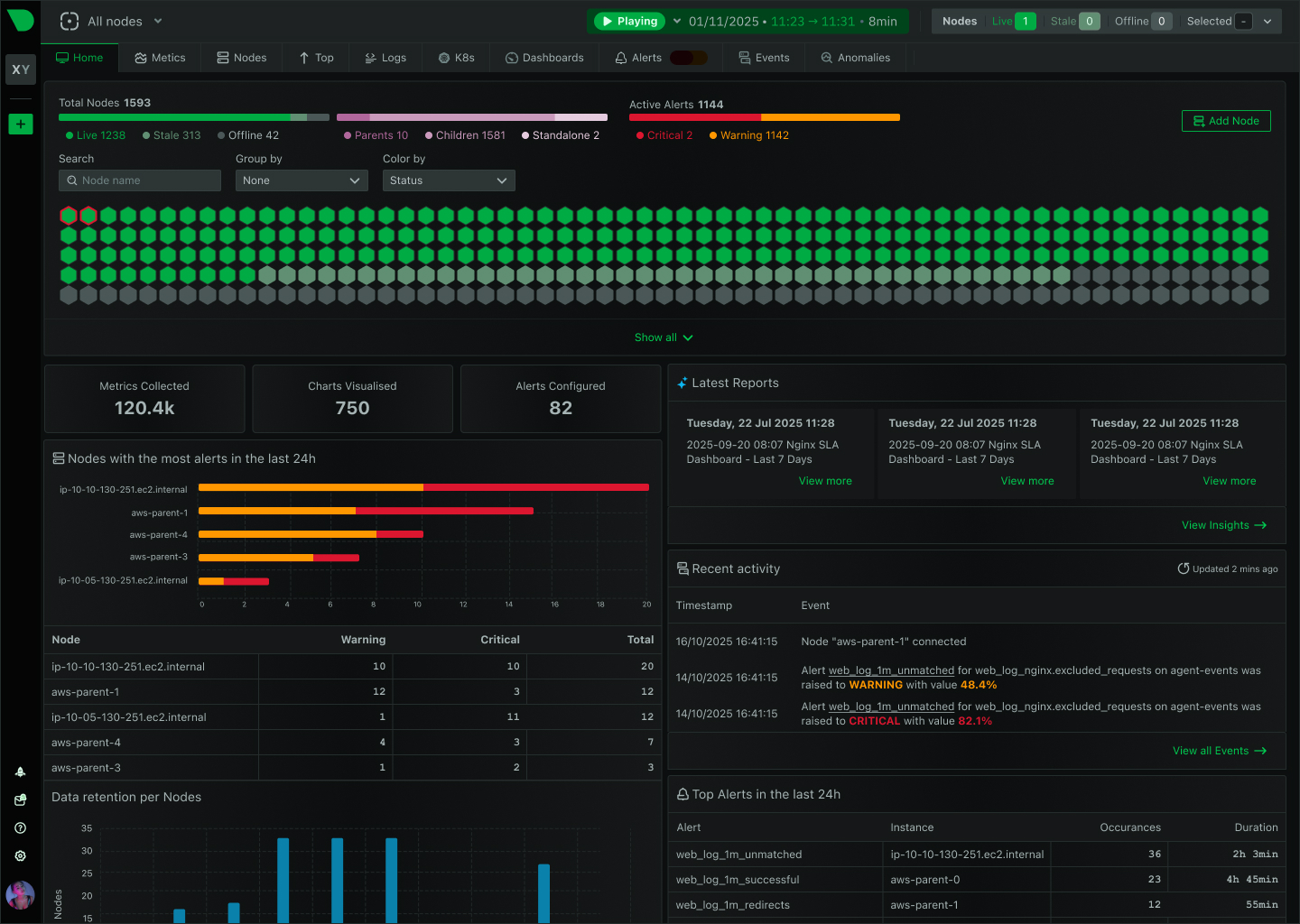

The Future of Infrastructure Observability

Netdata is evolving from infrastructure monitoring into a complete, AI-native observability platform. This page outlines our strategic direction and the investments we are making across the product.

The four pillars guiding product development, engineering priorities, and platform decisions

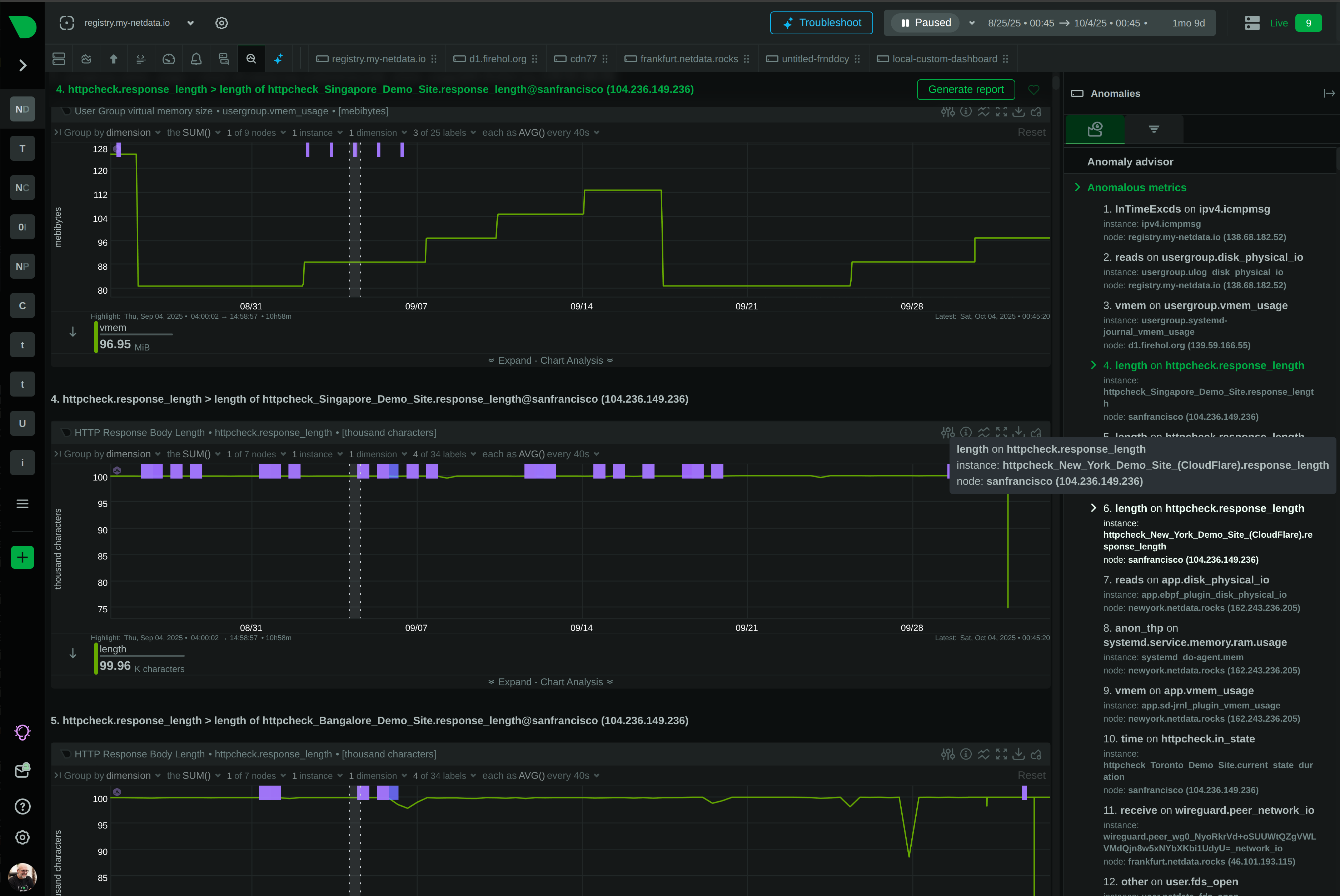

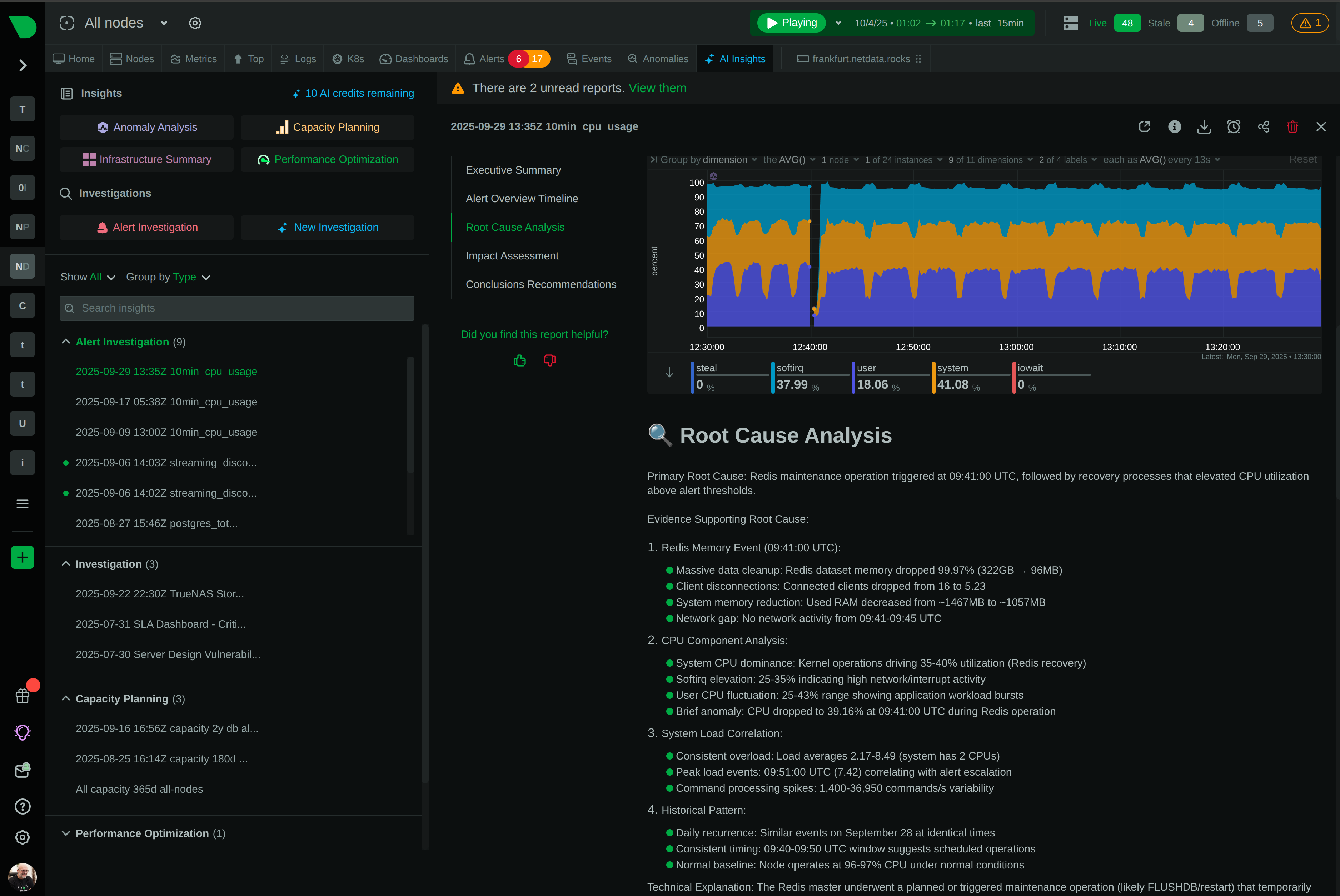

Extending unsupervised ML toward proactive anomaly surfacing, automated root cause analysis, and autonomous AI SRE capabilities that triage incidents and recommend remediation.

Building toward a single platform covering infrastructure metrics, application traces, network topology, and cloud service health with OpenTelemetry compliance across all signal types.

Investing in richer custom dashboards, cloud-evaluated alert aggregation, SLO/SLA compliance reporting, and deeper integration with incident management workflows.

Deepening support for external secret stores, expanding ITSM integrations (ServiceNow, PagerDuty), strengthening access controls, and hardening for air-gapped environments.

Expanding OpenTelemetry compatibility, Prometheus remote write, Grafana plugin capabilities, and third-party integrations so Netdata fits into any existing monitoring stack.

Continuing to push compute to the edge for predictable costs, low latency, and full data sovereignty while improving streaming protocols and parent scalability.

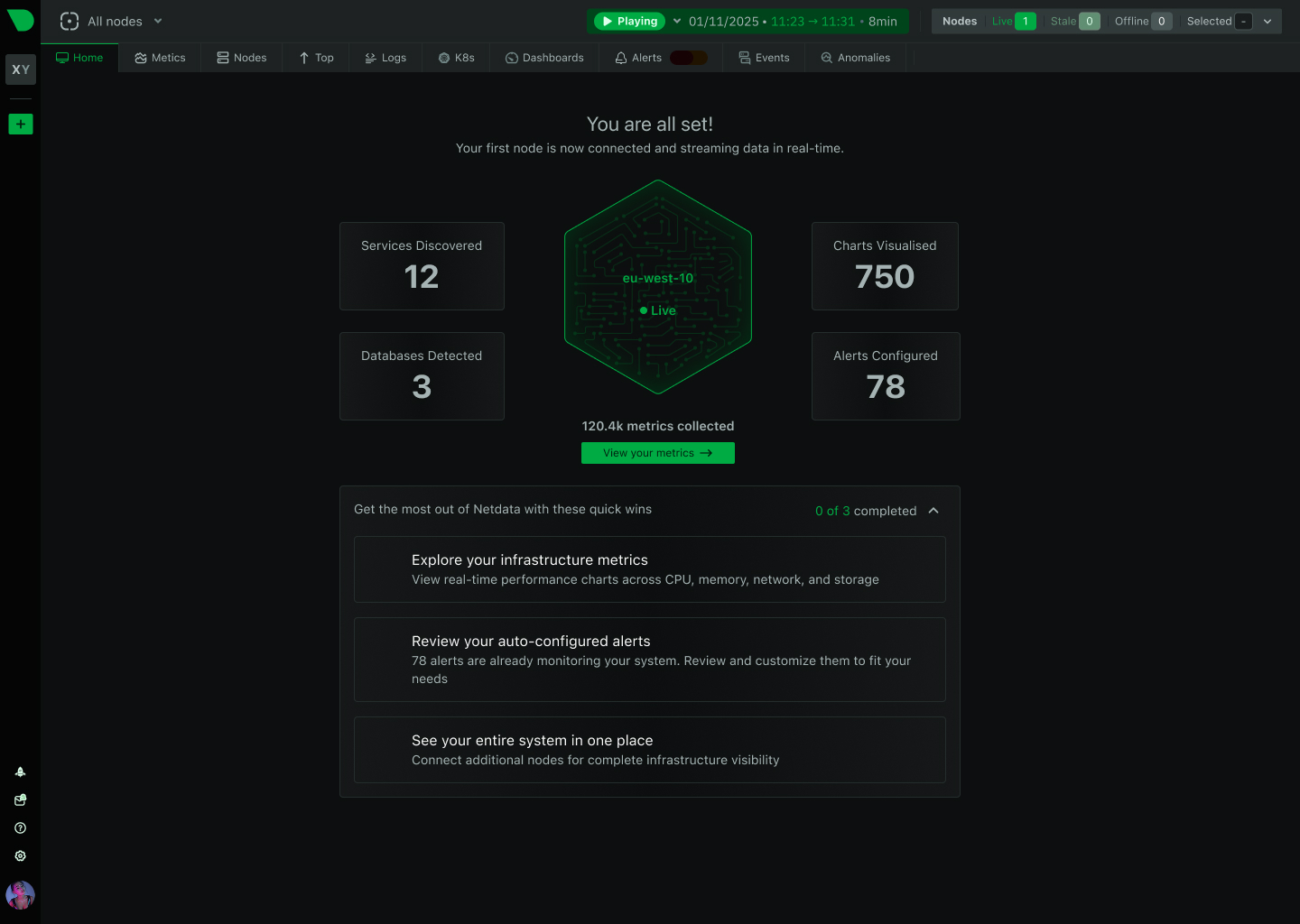

Trusted by organizations worldwide

Netdata already runs unsupervised ML on every collected metric. We are extending this foundation toward proactive anomaly surfacing, automated root cause analysis, and autonomous AI SRE capabilities.

18 ML models per metric, trained every 3 hours

Explore AI Features

Release cadence: a new Agent version every 6 weeks, continuous delivery for Cloud

GitHub Releases

78,000+ GitHub stars, 615+ contributors

Join the Community

Direct access to the product team

Request a Briefing

The commitments that inform every product decision at Netdata

Every new capability auto-discovers, auto-configures, and delivers value out of the box. No setup wizards, no manual instrumentation.

We distribute compute to the edge instead of centralizing data. This keeps costs predictable, latency low, and data under your control.

The Netdata Agent, including the ML engine, database, and query engine, is licensed under GPLv3+. That commitment is permanent.

Monitoring should cost a fraction of the infrastructure it monitors. Our architecture eliminates the data pipelines and storage costs that make observability expensive.

Virtualized infrastructure demands high-resolution data. We collect metrics at per-second granularity by default, and this is available to every user.

Metrics stay on your infrastructure. Only dashboard metadata reaches the cloud. This is a design decision, built into the architecture.